*I believe blog posts should be human-generated, so AI hasn't been responsible for this shoddy mess (ahem, technical musings). Note that I'm not available to review the latest tools on request; I only review those I feel like looking into, so please don't ask!*

In my previous post I spoke about a few of the AI-based tools I'd been experimenting with personally. Napkin.ai in particular is a time-saving app I've already returned to since, and I expect I will again.

This week, my attention has been drawn to a couple of other tools, namely:

- Cursor – an AI-first IDE

- Fine.ai – an AI-agent-powered tool which syncs with your repo and raises tickets to improve the code

The Experiment

I thought I'd create a test automation framework from a few prompts in Cursor, attempt to add a test, and see how long it took to get it working. Then, once it was in Git, I'd pass the repo over to Fine to see whether it could enhance it with further tests, and whether those would actually work once merged back in. See below for a link to the Git repo.

The Result

Have a look at the code in my GitHub repo here:

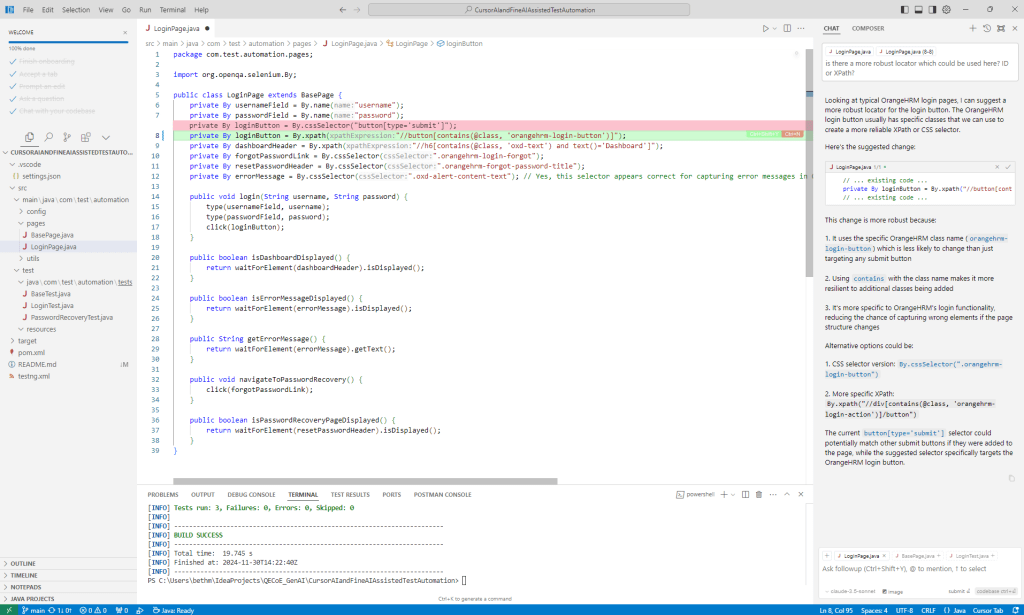

After under two hours' work (as a relative novice with these sorts of AI tools), I was able to spin up a framework which had:

- Selenium WebDriver

- Selenium Manager – for browser and driver management

- TestNG – for test management

- ExtentListener – for reporting

- POM – Page Object Model, for clean code and structure

- OrangeHRM – application under test

- 3 working test cases

The tests Fine produced were perhaps of lower quality than Cursor's, which is a little surprising given that both had access to the source code. Things I noticed on inspection:

- The WebElement locator strategy was not ideal – CSS selectors were chosen by default (this could easily be tweaked with a prompt)

- The test cases looked sensible, but on closer inspection I noticed they were failing because they weren't asserting the right thing. This is where the human in the loop comes in: AI doesn't have your experience of the application, so false negatives are a real risk of over-relying on the technology here.

I did think the integration between Fine and Git worked really well; its PRs in particular were better documented than a lot of the human-crafted ones I see. Note that I still had to ask it to create the PR, and the PR was created in draft form for my review before I could merge it in. All sensible precautions.

Most things did not work straight out of the box, but I was able to fix the 15 or so issues by querying Cursor's chat, passing it error logs and so on, and asking it to fix them. Being able to apply a fix immediately was a real time saver, particularly when debugging. Typical things which didn't work first time:

- Files not in the right place

- Dependencies missing from pom.xml

- Imports missing from test cases

The Verdict

Now, if you do a lot of test automation, this analogy will make sense: using Cursor as an IDE gives you a bit of a leg up on the current offerings in the same way that Playwright does. Selenium is incredible, but you have to do the work of customising it and building a framework around it yourself (in the same way that, at present, you need to plug AI capabilities into a standard IDE and manage them separately). Playwright, by contrast, is a framework out of the box, complete with bells, whistles and ease-of-use features. In the same way, Cursor is ready to go out of the box. Would I use it again? Yes, probably, but some of its features didn't feel mega intuitive to someone familiar with other IDEs such as Eclipse, IntelliJ or VS Code. Perhaps that would come in time.

Fine was rate-limited as I wasn't paying for it, and I found the interface a little tricky to get the hang of, as well as running into lots of timeout issues. But I did find its code decent, and the integration with GitHub Enterprise (GHE) could be a good time saver for teams looking for help with some of the grunt work. Leave the creative thinking and the thorny stuff to the real people, though (as Fine say themselves when you sign up).

It was very, very easy to become over-trusting of both tools, though, and potentially get yourself into more of a mess than if you'd just done it yourself. I'm excited to see where the technology goes next, and happy to be learning more.